It’s getting down to the wire (or should I say EEG electrode) to get this project ready for performance at the upcoming Art-A-Hack Final Presentations on July 15th. At this past week’s meeting, Gabe, Pat, and Gal brainstormed further about how to work with OpenBCI EEG data in Processing to create the real-time projected visuals for Dual Brains.

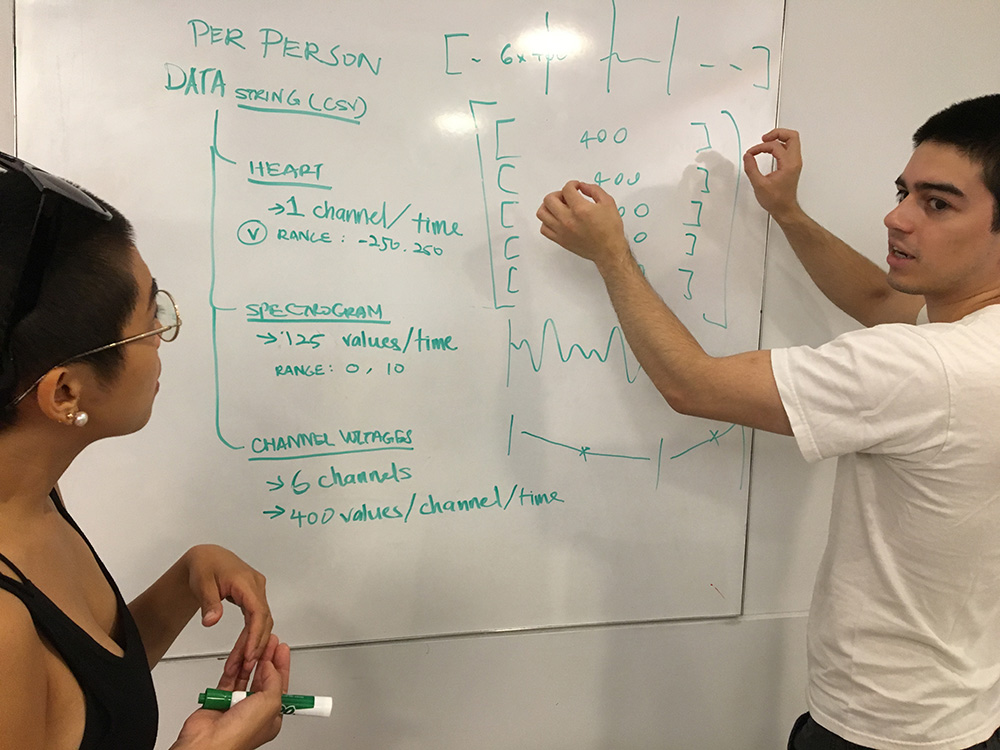

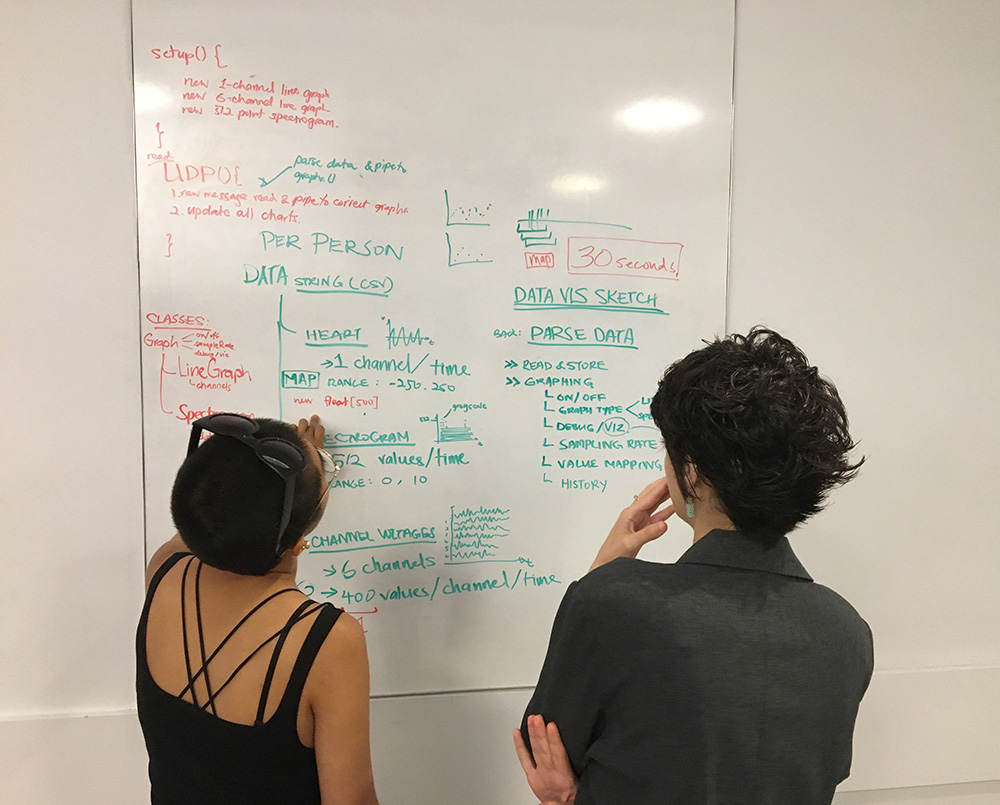

Above, they are working out which data sets should be sent over to Processing, and at what rate. That was no small feat, since OpenBCI produces raw data that must be analyzed in real time. This was Gabe’s department!
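To give a rough idea of what "analyzing raw data" can mean, here is a minimal sketch that reduces a window of raw samples from one channel to a couple of band-power numbers. It is not the team’s actual pipeline. The 250 Hz sample rate, the band edges in `BANDS`, and the helper names `band_power` and `band_powers` are all hypothetical choices made for illustration. It uses a naive DFT to stay dependency-free.

```python
import cmath
import math

# Hypothetical band edges in Hz; adjust to whatever the visuals need.
BANDS = {"alpha": (8, 12), "beta": (13, 30)}


def band_power(samples, fs, lo_hz, hi_hz):
    """Sum of squared DFT magnitudes (scaled by 1/N) for bins in [lo_hz, hi_hz].

    Naive O(N^2) DFT, fine for a short window; a real-time version would
    more likely use an FFT library.
    """
    n = len(samples)
    mean = sum(samples) / n
    x = [s - mean for s in samples]  # remove the DC offset raw EEG usually carries
    power = 0.0
    for k in range(n // 2 + 1):  # non-negative frequencies only
        freq = k * fs / n  # frequency of bin k in Hz
        if lo_hz <= freq <= hi_hz:
            coeff = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            power += abs(coeff) ** 2 / n
    return power


def band_powers(samples, fs, bands):
    """Map each named band to its power for one channel's window of samples."""
    return {name: band_power(samples, fs, lo, hi) for name, (lo, hi) in bands.items()}
```

Sending a few numbers like these per channel, rather than every raw sample, is one way to keep the data volume manageable for the visuals. For example, a synthetic one-second 10 Hz sine sampled at 250 Hz lands almost entirely in the alpha band.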

A key consideration was finding the right amount of information: enough to create compelling visuals, but not so much that it exceeds our computing limits. We want everything to run smoothly.

We will collect data from six EEG channels per person, plus ECG (heart) data. We were also hoping to add EMG (muscle) data, though that may prove to be too much in this short time frame.

Meanwhile, we had sound to work out, too. Aaron and Gabe discussed ways to work with brain frequencies, which are quite low (we expect roughly between 8 and 20-something Hz). We also thought we could use the ECG data so the audience could experience the performers’ heartbeats.
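Frequencies of 8 to 20-something Hz sit at or below the bottom of human hearing (around 20 Hz), so one common trick, which is not necessarily what Aaron and Gabe settled on, is to raise them by whole octaves (doubling) until they become audible. The sketch below does that, and also turns ECG R-peak times into beats per minute that could drive a pulse or tempo. The `min_hz` default of 110 Hz, the function names, and the assumption that R-peaks have already been detected are all hypothetical.

```python
def to_audible(freq_hz, min_hz=110.0):
    """Raise freq_hz by octaves (doubling) until it reaches min_hz.

    Returns (shifted frequency in Hz, number of octaves raised).
    Octave shifting keeps the pitch class, so a 10 Hz rhythm becomes 160 Hz.
    """
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    octaves = 0
    while freq_hz < min_hz:
        freq_hz *= 2
        octaves += 1
    return freq_hz, octaves


def bpm_from_r_peaks(peak_times_s):
    """Heart rate in beats per minute from already-detected R-peak times (seconds)."""
    if len(peak_times_s) < 2:
        raise ValueError("need at least two R-peaks")
    intervals = [b - a for a, b in zip(peak_times_s, peak_times_s[1:])]
    mean_interval = sum(intervals) / len(intervals)  # average R-R interval in seconds
    return 60.0 / mean_interval
```

For instance, `to_audible(10.0)` returns `(160.0, 4)`, and R-peaks every half second give 120 beats per minute.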

Fingers crossed that in the next few days we can bring it all together (equipment, programming, visuals, and sound) and make the Dual Brains performance happen!